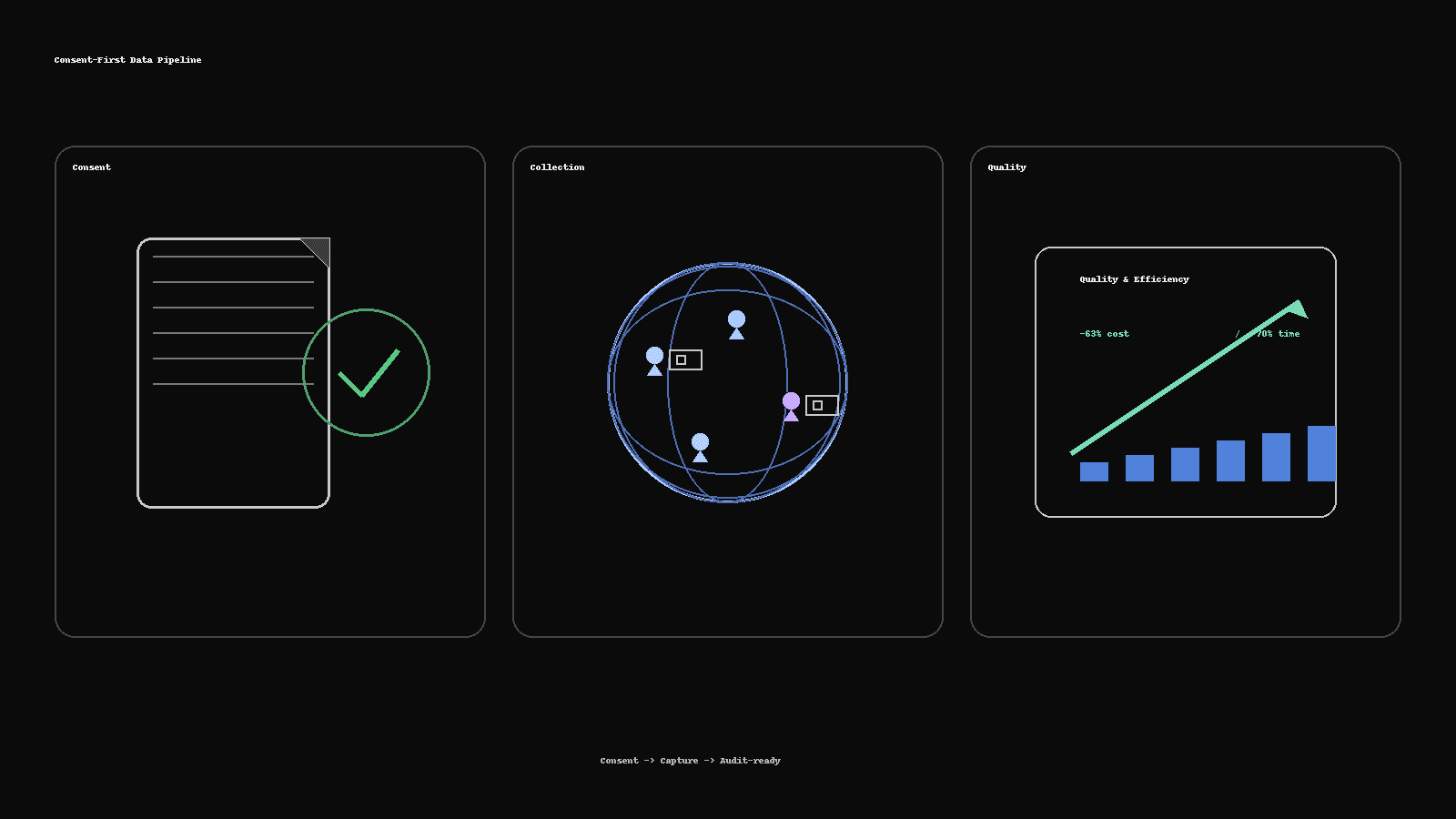

Consent-First Data Collection: How We Delivered 300+ People-Image Sets 63% Cheaper

How Saytica built an audit-ready, real-person image dataset: 300+ participants across six demographic groups, delivered 63% cheaper and 70% faster—using consent kits, vendor routing, QC scorecards, and dedupe pipelines.

Consent-First Data Collection: How We Delivered 300+ People-Image Sets 63% Cheaper

Reading time: ~5 minutes

When DataOceanAI asked for a real-person image dataset—fast, global, and auditable—they’d already tried a vendor and weren’t satisfied. We shipped 300+ participant sets across six demographic groups, cut cost by 63%, and finished 70% faster—without compromising privacy.

Here’s the exact playbook.

1) The brief (and constraints)

Real people, varied lighting/backgrounds and poses

Six demographic groups to balance representation

Tight schedule + budget, audit-ready artifacts

Zero tolerance for unclear consent or messy metadata

2) Consent-first by design

Consent kits (plain language, per locale).

Signed media consent (image capture, storage, usage scope, withdrawal rights)

Language-appropriate versions + short explainer video for collectors

Participant IDs decoupled from PII; only minimal metadata captured

Bystander protection: no bystanders or automatic face-blur for incidental appearances

Audit trail: timestamped consent files + mapping to asset IDs

Why it matters: when consent is clear, rework disappears and legal review is straightforward.

3) Collection playbook that scales

Vendor routing: pre-vetted collectors across regions; parallel lanes

Micro-guide: one-page capture brief with do/don’t examples

Variation grid: indoor/outdoor, front/three-quarter/profile, glasses/masks, neutral/smile

Device diversity: camera phones across common ranges; EXIF retained

Live QC board: reviewers flag issues in real time before batches close

4) Quality you can measure (and prove)

Gold sets: a small reference pack used to calibrate reviewers

IAA targets: inter-annotator agreement goals per attribute

Dedupe: perceptual hashing + Hamming thresholds to remove near-duplicates

Metadata validators: required fields, locale checks, EXIF sanity

Scorecards: error classes (pose, blur, illumination, consent mismatch), with per-vendor dashboards

5) Where the 63% cost / 70% time savings came from

Parallel collection lanes (no serial bottlenecks)

Realtime QC → fix at source, not in post

Automated dedupe + validators → fewer rejections, less re-capture

Clear incentives tied to pass rate and on-time delivery

Consent artifacts once, reused everywhere (no repeat legal back-and-forth)

6) Deliverables

Images: organized by participant ID and split (train/val/test if needed)

Metadata: JSON/CSV (demographics, capture conditions, device, consent link)

Consent pack: signed files + manifest mapping to assets

QA bundle: scorecards, IAA report, dedupe log, known limitations

Docs: data dictionary, file tree, usage notes, withdrawal procedure

7) Recommendations for any people-image project

Start with consent & withdrawal—in local language.

Define a variation grid early; sample before you scale.

Add gold sets and IAA; publish the thresholds.

Automate dedupe & validation; human time goes to edge cases.

Keep a clean audit trail—you’ll thank yourself later.

Tags

More Articles

Explore more from our blog

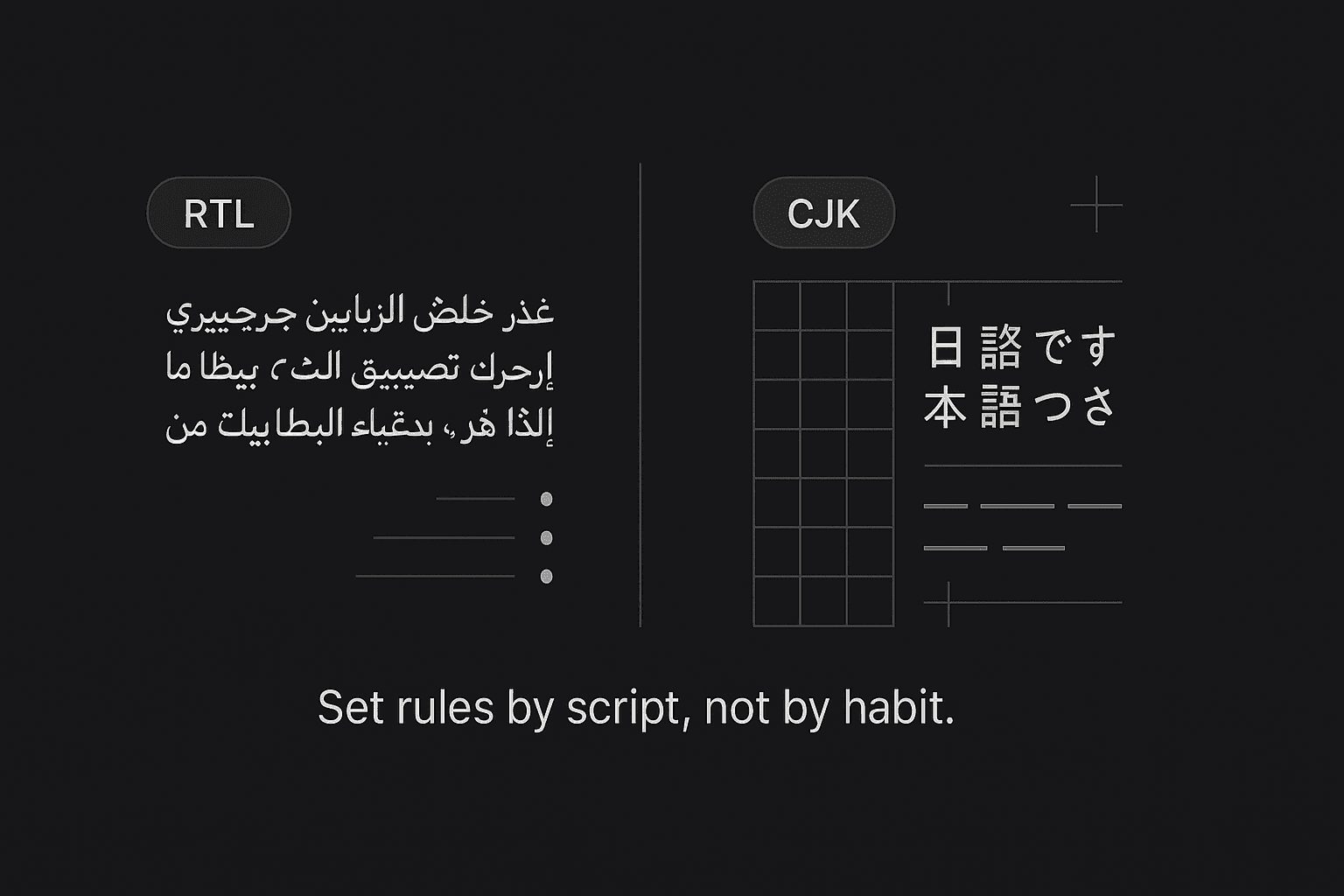

Multilingual DTP Without the Squeeze: RTL/CJK Typography Essentials

Layouts break after translation when RTL and CJK rules aren’t respected. This 5-minute guide covers Arabic/Hebrew (RTL) and Chinese/Japanese/Korean (CJK) essentials, InDesign settings, font choices, and a two-minute preflight checklist.

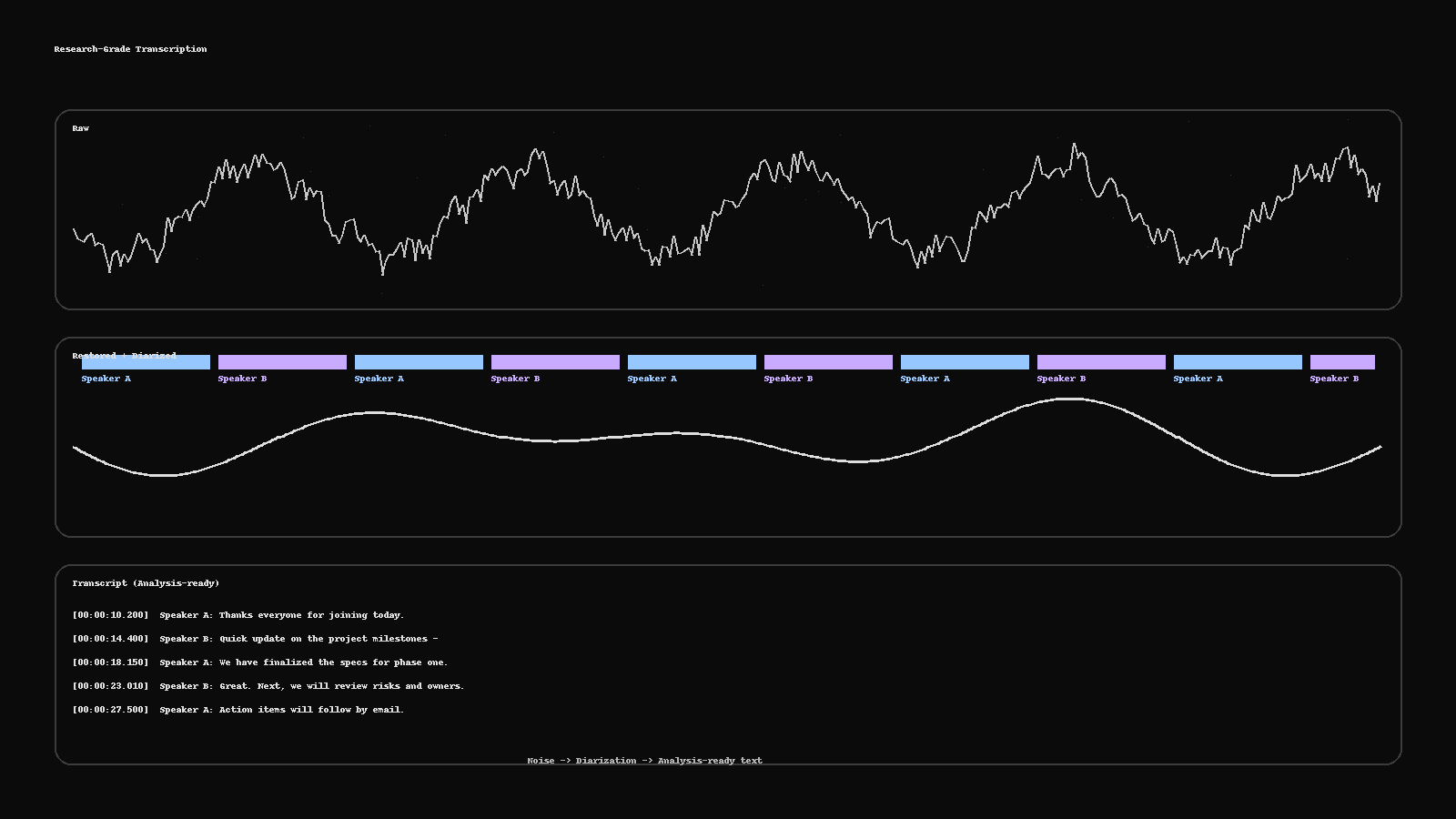

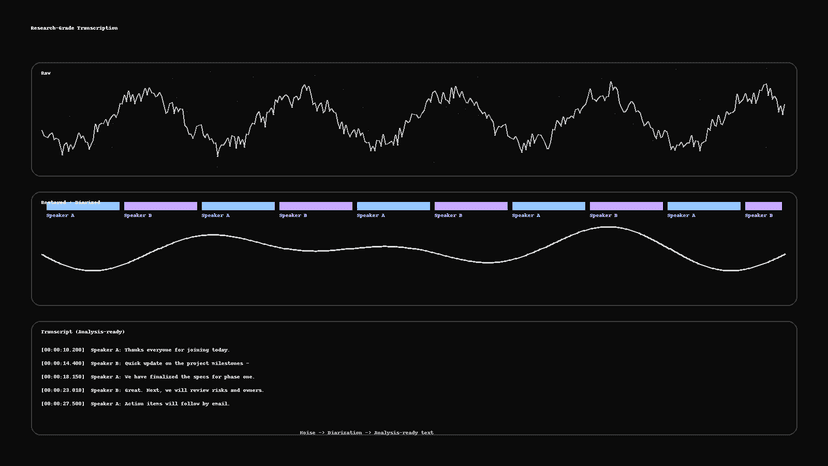

Research-Grade Transcription: From Noisy Audio to Analysis-Ready Text

Turn messy recordings into clean, analysis-ready text. This guide shows a practical pipeline—restoration, diarization, human QC, PII redaction, and deliverables (RTTM, ELAN, TextGrid, SRT)—plus a two-minute checklist to run before publishing.

Dubbing vs Voice-Over vs UN-Style: Pick the Right Voice for Your Market

Not sure whether to dub, use voice-over, or go UN-style? Here’s a fast framework with cost/time differences, when to use each, a casting brief template, and the delivery specs your studio will ask for.

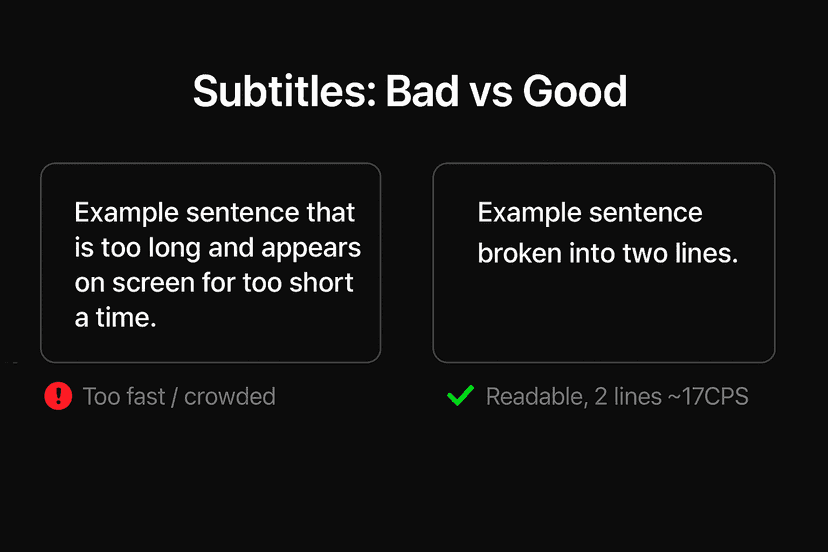

Subtitles That Don’t Feel “Machine”: Read-Speed, SDH & Platform Specs

Why some captions feel robotic—and how to fix them fast. A practical guide to read-speed, SDH vs. standard subtitles, on-screen text, and a simple QC checklist you can run before publish.

The 2025 Localization Playbook: TEP vs MTPE—When to Use Which

Choose the right workflow in 2025. This playbook shows when to use TEP (human translation + edit + proof) and when MTPE makes sense—plus a decision matrix, quality bars, a pilot plan, and risk controls.