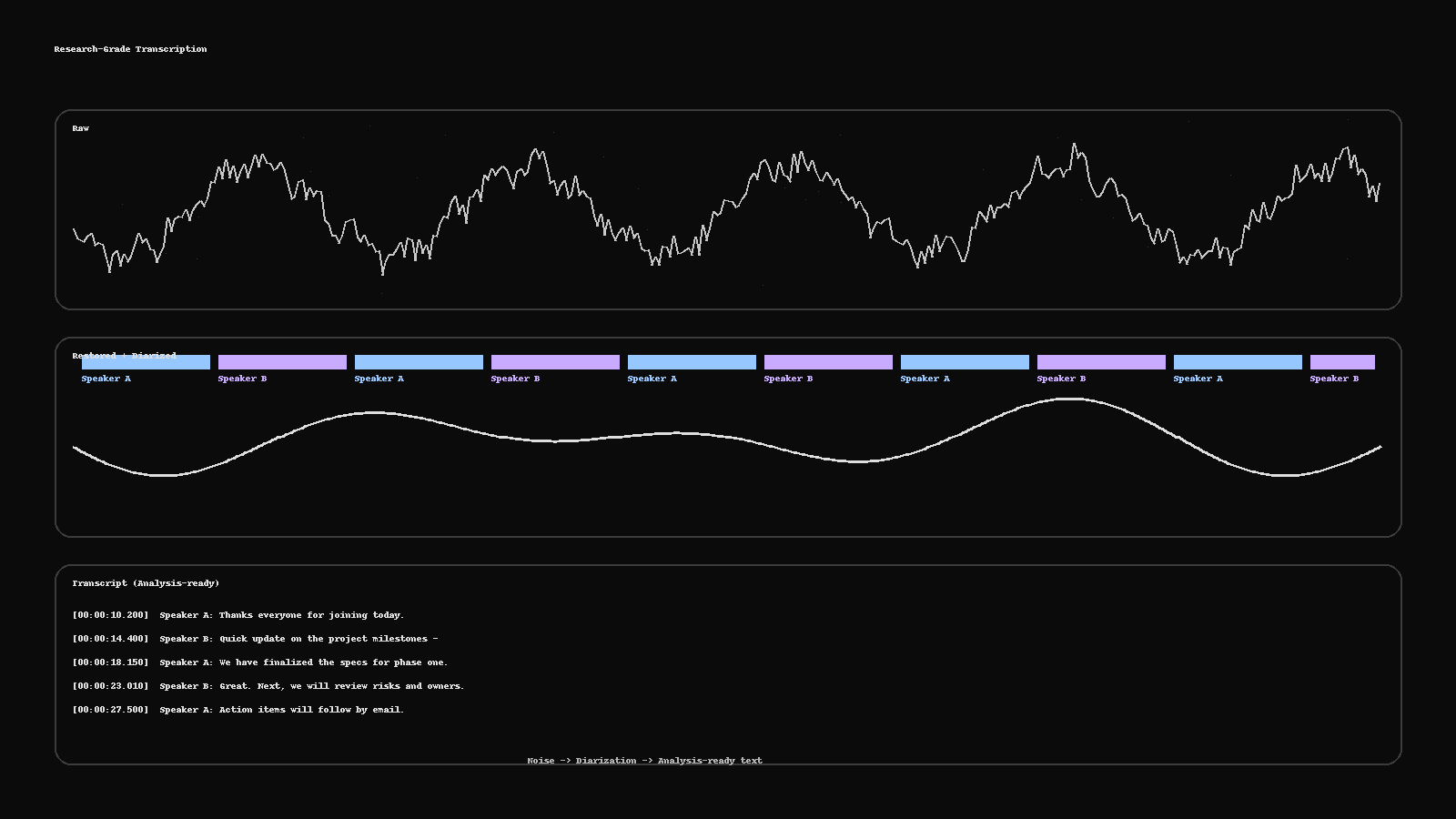

Research-Grade Transcription: From Noisy Audio to Analysis-Ready Text

Turn messy recordings into clean, analysis-ready text. This guide shows a practical pipeline—restoration, diarization, human QC, PII redaction, and deliverables (RTTM, ELAN, TextGrid, SRT)—plus a two-minute checklist to run before publishing.

Reading time: ~5 minutes

Interviews, clinics, panels, and field recordings rarely arrive studio-clean. Research-grade transcription means you can trust the text: who spoke, when they spoke, and what was said, without exposing sensitive information. Here’s a practical pipeline you can run (or ask us to run) to turn noisy audio into analysis-ready text.

1) Stabilize the audio (fast restoration)

You don’t need to over-engineer this, but basic cleanup improves everything downstream:

Noise/Dereverb: reduce HVAC hum, hiss, room echo.

Leveling: normalize peaks; keep headroom.

Channel sanity: fix inverted stereo, drop dead channels.

Tools often used: iZotope RX, Adobe Audition, Reaper/Audacity plug-ins.

Why it matters: A better signal-to-noise ratio (SNR) generally means higher ASR confidence and fewer human fixes.
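As a rough illustration of the leveling step, here is a minimal sketch of peak normalization with headroom. It assumes the audio is already loaded as floating-point samples in the range -1.0 to 1.0; the function name `peak_normalize` and the 1 dB default headroom are illustrative choices, not a recommendation from any particular tool.

```python
def peak_normalize(samples, headroom_db=1.0):
    """Scale samples so the loudest peak sits `headroom_db` below full scale.

    Assumes float samples in the range -1.0..1.0 (an assumption of this sketch).
    """
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # pure silence: nothing to scale
    target = 10 ** (-headroom_db / 20)  # convert dBFS to linear amplitude
    gain = target / peak
    return [s * gain for s in samples]


quiet = [0.0, 0.1, -0.25, 0.2]
leveled = peak_normalize(quiet, headroom_db=1.0)
```

Dedicated restoration tools do much more than this (noise reduction, dereverb, loudness targets); the sketch only shows the "normalize peaks, keep headroom" idea.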

2) Diarize first, transcribe second

Diarization splits speech by speaker: Speaker A / Speaker B / Moderator.

Auto-diarize, then human-verify overlaps and switches.

Keep consistent labels across the session (don’t rename A→B mid-call).

Deliverables for researchers often include RTTM, ELAN (.eaf), or Praat TextGrid so you can align text with audio windows.
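To make the RTTM deliverable concrete, here is a minimal sketch that serializes verified speaker turns into RTTM `SPEAKER` lines (file ID, channel, onset, duration, speaker name, with `<NA>` for unused fields). The function name `to_rttm`, the session ID, and the sample turns are hypothetical; the sketch assumes speaker labels contain no spaces.

```python
def to_rttm(file_id, turns, channel=1):
    """Serialize (onset_seconds, duration_seconds, speaker) turns as RTTM lines."""
    lines = []
    for onset, duration, speaker in sorted(turns):
        lines.append(
            f"SPEAKER {file_id} {channel} {onset:.3f} {duration:.3f} "
            f"<NA> <NA> {speaker} <NA> <NA>"
        )
    return "\n".join(lines)


# Hypothetical, human-verified turns for one session.
turns = [
    (4.2, 7.5, "Speaker_A"),
    (0.0, 4.2, "Moderator"),
]
rttm_text = to_rttm("session01", turns)
```

Sorting by onset keeps the file in time order even if turns were corrected out of sequence during review.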

3) Choose the right transcript style

Verbatim: hesitations, fillers, false starts—best for forensic/clinical.

Clean-read: readable sentences—best for analysis and reports.

Timestamps: per-utterance or fixed intervals (e.g., every 10s).

On-screen text / captions: generate SRT/WebVTT when you need media publishing.
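For per-utterance timestamps and caption export, a minimal sketch of SRT generation might look like the following. It assumes utterances arrive as `(start_seconds, end_seconds, text)` tuples; the helper names are illustrative.

```python
def srt_timestamp(seconds):
    """Format seconds as the SRT timestamp HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(utterances):
    """Build SRT text from (start_seconds, end_seconds, text) tuples."""
    blocks = []
    for index, (start, end, text) in enumerate(utterances, start=1):
        blocks.append(
            f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}"
        )
    return "\n\n".join(blocks) + "\n"


srt_text = to_srt([(0.0, 2.5, "Thanks for joining."), (2.5, 5.0, "Happy to be here.")])
```

WebVTT is similar but starts with a `WEBVTT` header line and uses a period instead of a comma before the milliseconds.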

4) Protect privacy (PII-aware workflow)

If recordings include personal data, run PII detection + human verification:

Names, phone numbers, addresses, IDs → redact or mask per policy.

Keep a redaction map (CSV/JSON) that logs what was removed and why.

Use role-based access and encrypted transfer for all files.
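The redaction-map idea can be sketched as follows. This is a deliberately simple, regex-based illustration: the two patterns (a phone number and a hypothetical participant ID of two letters plus six digits) and the `reason` strings are assumptions, and pattern matching alone will miss names and addresses, which is why human verification stays in the loop.

```python
import json
import re

# Illustrative patterns only; a real policy needs broader coverage and review.
PII_PATTERNS = {
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
    "ID": re.compile(r"\b[A-Z]{2}\d{6}\b"),  # hypothetical participant-ID format
}


def redact(text):
    """Mask matches and return (redacted_text, redaction_map)."""
    redaction_map = []
    for label, pattern in PII_PATTERNS.items():
        def mask(match, label=label):
            redaction_map.append({
                "type": label,
                "chars_removed": len(match.group(0)),
                "reason": f"matched {label} pattern",
            })
            return f"[{label}]"
        text = pattern.sub(mask, text)
    return text, redaction_map


clean, log = redact("Call me at +1 415 555 0100, participant AB123456.")
redaction_json = json.dumps(log, indent=2)  # deliverable redaction map
```

The map here records the type and length of each removal rather than the original value, so the log itself does not become a second copy of the sensitive data. Whether the original should be kept in an access-controlled store is a policy decision.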

5) Human QC that actually moves the needle

Even with strong ASR, humans close the last mile:

Correct domain terminology (medical, legal, product).

Fix diarization errors, crosstalk, and time drift.

Enforce style (numbers, casing, punctuation).

Log errors by class (meaning, speaker split, timing) for QA scorecards.

Target outputs: TXT/DOCX/CSV/JSON transcripts; RTTM/ELAN/TextGrid for time alignment; SRT/WebVTT for captions; optional MP4 preview with burned-in subs for fast review.
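For the error-logging step in this section, here is a minimal sketch of how logged errors could be rolled up into a QA scorecard. The three class names come from the list above; the per-minute rate and the record layout (`{"class": ...}`) are assumptions made for illustration.

```python
from collections import Counter

ERROR_CLASSES = ("meaning", "speaker_split", "timing")


def scorecard(errors, audio_minutes):
    """Count logged errors per class and compute a per-minute rate."""
    counts = Counter(error["class"] for error in errors)
    unknown = set(counts) - set(ERROR_CLASSES)
    if unknown:
        raise ValueError(f"unknown error classes: {sorted(unknown)}")
    return {
        name: {"count": counts[name], "per_minute": counts[name] / audio_minutes}
        for name in ERROR_CLASSES
    }


# Hypothetical review log for a 10-minute recording.
review_log = [
    {"class": "meaning"},
    {"class": "timing"},
    {"class": "timing"},
]
card = scorecard(review_log, audio_minutes=10)
```

Rejecting unknown classes keeps reviewers on a shared vocabulary, which makes scorecards comparable across sessions.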

6) A 2-minute QC checklist (run before publishing)

Timing: no flashes (cues shown for under 1 s) or sleepers (cues held for over 6 s); timestamps align with speech.

Diarization: consistent speaker labels; overlaps handled.

Language: no dropped meanings; grammar/spell checks pass.

Terminology: glossary applied; units/doses/figures correct.

PII: redactions complete; redaction map delivered.

Exports: provide DOCX/TXT + researcher format (RTTM/ELAN/TextGrid) + captions if needed.
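The timing item in this checklist is easy to automate. The sketch below flags cues shorter than 1 second or longer than 6 seconds, the two thresholds given above; the function name and the `(start_seconds, end_seconds)` cue format are assumptions.

```python
def timing_issues(cues, min_seconds=1.0, max_seconds=6.0):
    """Return (cue_number, issue, duration) for cues outside the allowed range."""
    issues = []
    for number, (start, end) in enumerate(cues, start=1):
        duration = end - start
        if duration < min_seconds:
            issues.append((number, "flash", duration))
        elif duration > max_seconds:
            issues.append((number, "sleeper", duration))
    return issues


# Hypothetical cue list: the first is too short, the third is too long.
cues = [(0.0, 0.5), (0.5, 3.0), (3.0, 10.5)]
problems = timing_issues(cues)
```

A check like this only covers durations; whether timestamps actually line up with speech still needs a listen-through.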

When ASR is enough—and when it isn’t

Good for: clear single-speaker audio, internal notes, quick comprehension → ASR + light human pass.

Not enough for: multi-speaker, domain-heavy, compliance-sensitive audio → ASR + diarization + human QC + PII redaction.

Tags

More Articles

Explore more from our blog

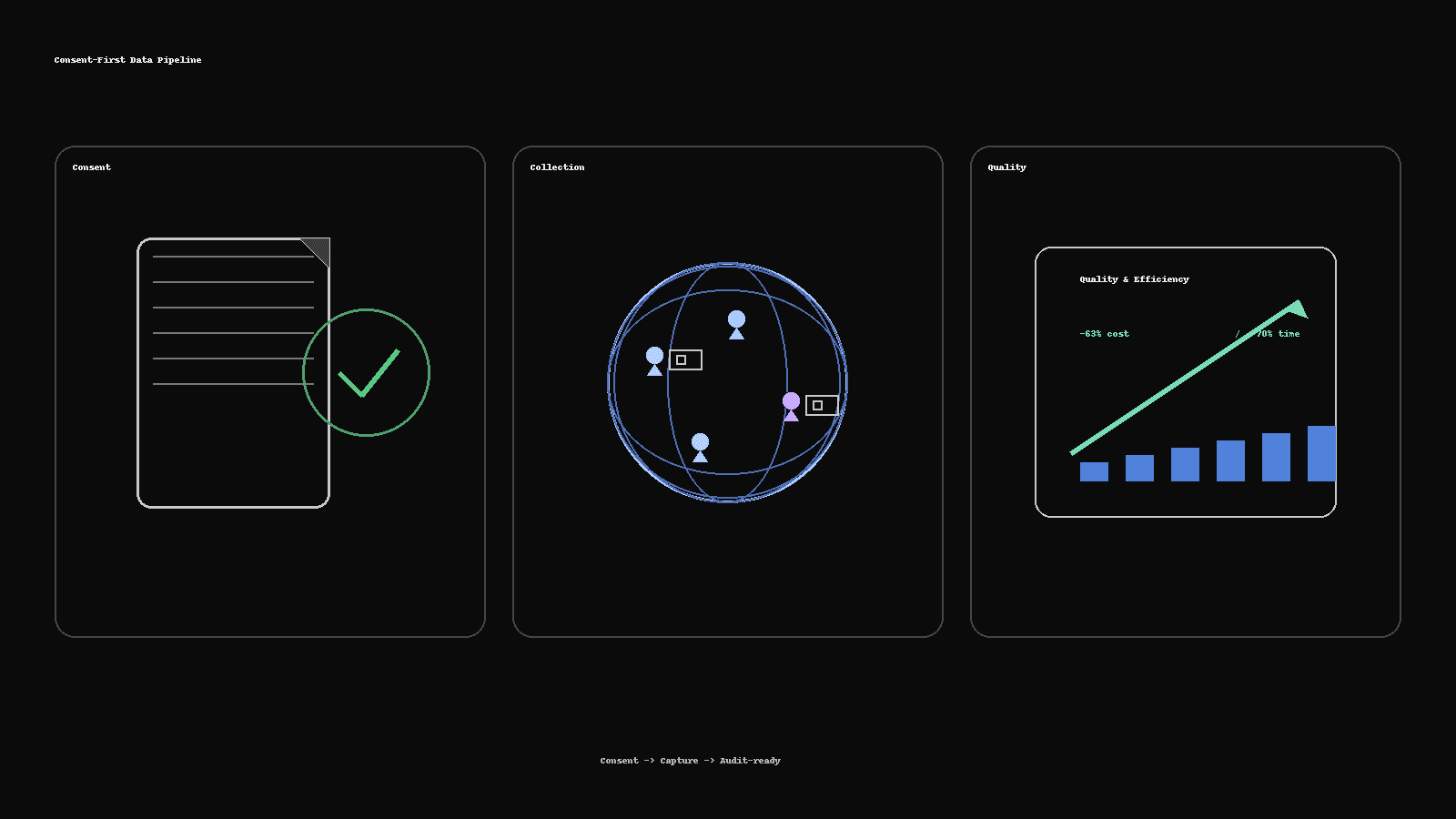

Consent-First Data Collection: How We Delivered 300+ People-Image Sets 63% Cheaper

How Saytica built an audit-ready, real-person image dataset: 300+ participants across six demographic groups, delivered 63% cheaper and 70% faster—using consent kits, vendor routing, QC scorecards, and dedupe pipelines.

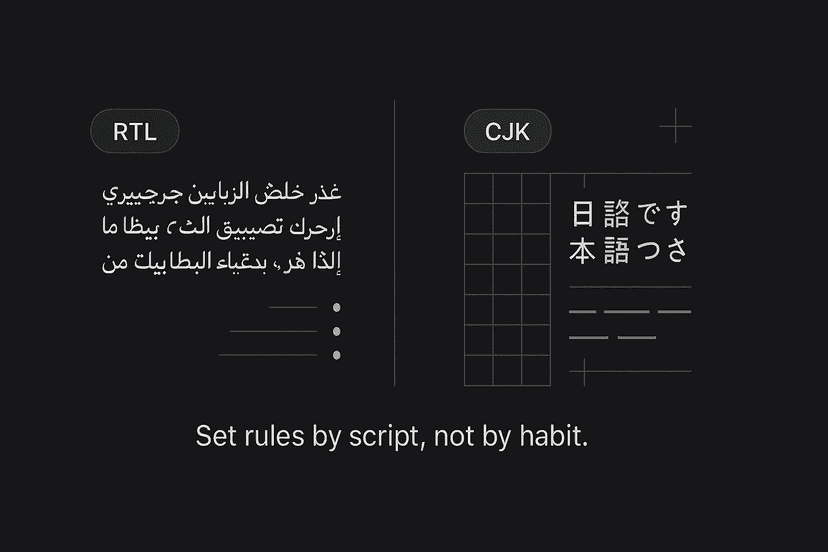

Multilingual DTP Without the Squeeze: RTL/CJK Typography Essentials

Layouts break after translation when RTL and CJK rules aren’t respected. This 5-minute guide covers Arabic/Hebrew (RTL) and Chinese/Japanese/Korean (CJK) essentials, InDesign settings, font choices, and a two-minute preflight checklist.

Dubbing vs Voice-Over vs UN-Style: Pick the Right Voice for Your Market

Not sure whether to dub, use voice-over, or go UN-style? Here’s a fast framework with cost/time differences, when to use each, a casting brief template, and the delivery specs your studio will ask for.

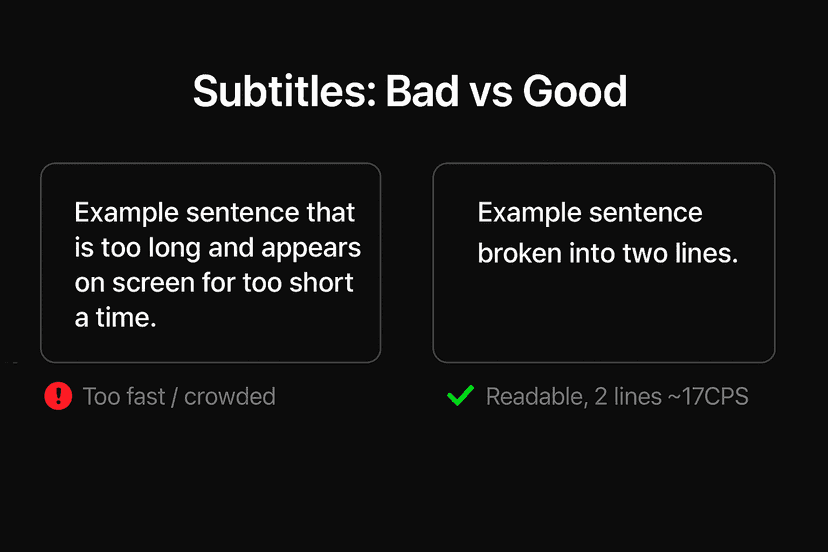

Subtitles That Don’t Feel “Machine”: Read-Speed, SDH & Platform Specs

Why some captions feel robotic—and how to fix them fast. A practical guide to read-speed, SDH vs. standard subtitles, on-screen text, and a simple QC checklist you can run before publish.

The 2025 Localization Playbook: TEP vs MTPE—When to Use Which

Choose the right workflow in 2025. This playbook shows when to use TEP (human translation + edit + proof) and when MTPE makes sense—plus a decision matrix, quality bars, a pilot plan, and risk controls.